If you spend any time browsing LinkedIn, you probably come across five or six posts a day about AIO/GEO. At least one of them will throw around the term "Knowledge Cutoff" like a looming expiration date for your content. The fear is that if an AI model finished its training in 2025, any content you’ve published since then is invisible to it.

The truth is, it’s complicated and a lot more interesting. One of the sharpest voices in this conversation, Wil Reynolds, recently posted on LinkedIn:

"...Gemini 3 Launched November 2025, the training data cutoff for Gemini 3 is believed to be January 2025. That means for answers that come from training data, all the work you've done since February 2025 is having no impact on 'training data' answers."

That's a bold statement. We wanted to test it ourselves.

If an LLM's knowledge is frozen at a specific date, what happens to all the content you published after that date? Does your latest blog post, your updated product page, simply... not exist for the model?

On the surface, yes. But modern LLMs don't rely solely on training data anymore. And that changes everything.

What Does Knowledge Cutoff Mean?

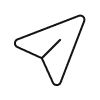

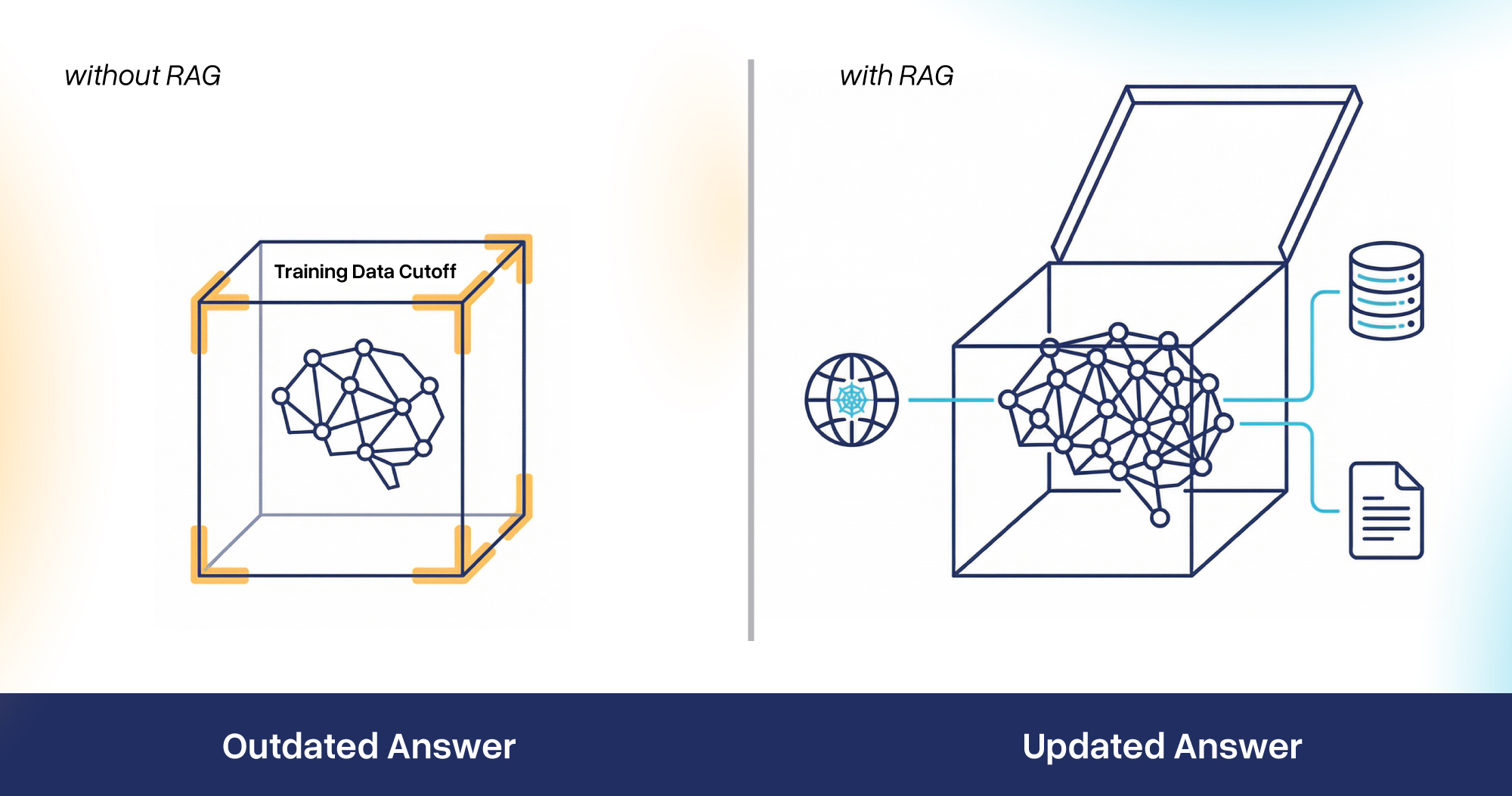

Training a frontier model like GPT-5 or Gemini 3 is super expensive and takes months of computing power. You can't just "hit save" and expect it to instantly know what happened five minutes ago. The data must be cleaned, deduplicated, and processed. The training data cutoff is the literal date after which no new data was included when training the model. By the time a model is released to the public, the world has already moved on. Knowledge cutoff is the point up to which the model can reliably answer questions from its training data.

What Are The Knowledge Cutoff Dates For Different Models?

Here is the landscape as of early 2026:

Do Knowledge Cutoffs Really Matter? How Do They Impact Results?

Here's the thing. Every major LLM provider knows that stale data is a problem. If an AI model was trained before your latest product launch and doesn't use a live search tool, it will confidently describe your old specs as the current standard. AI companies recognized this problem early and built a solution.

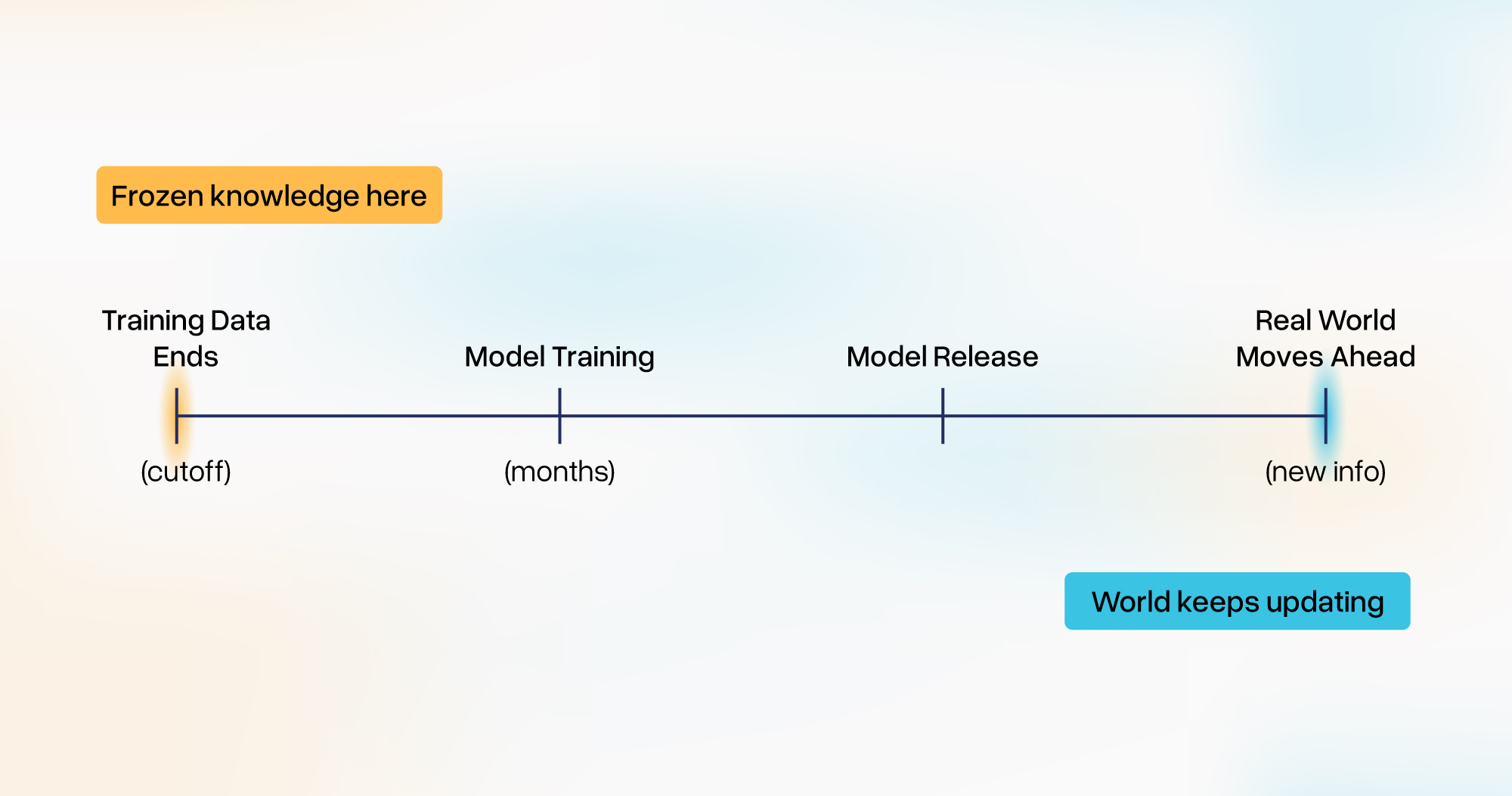

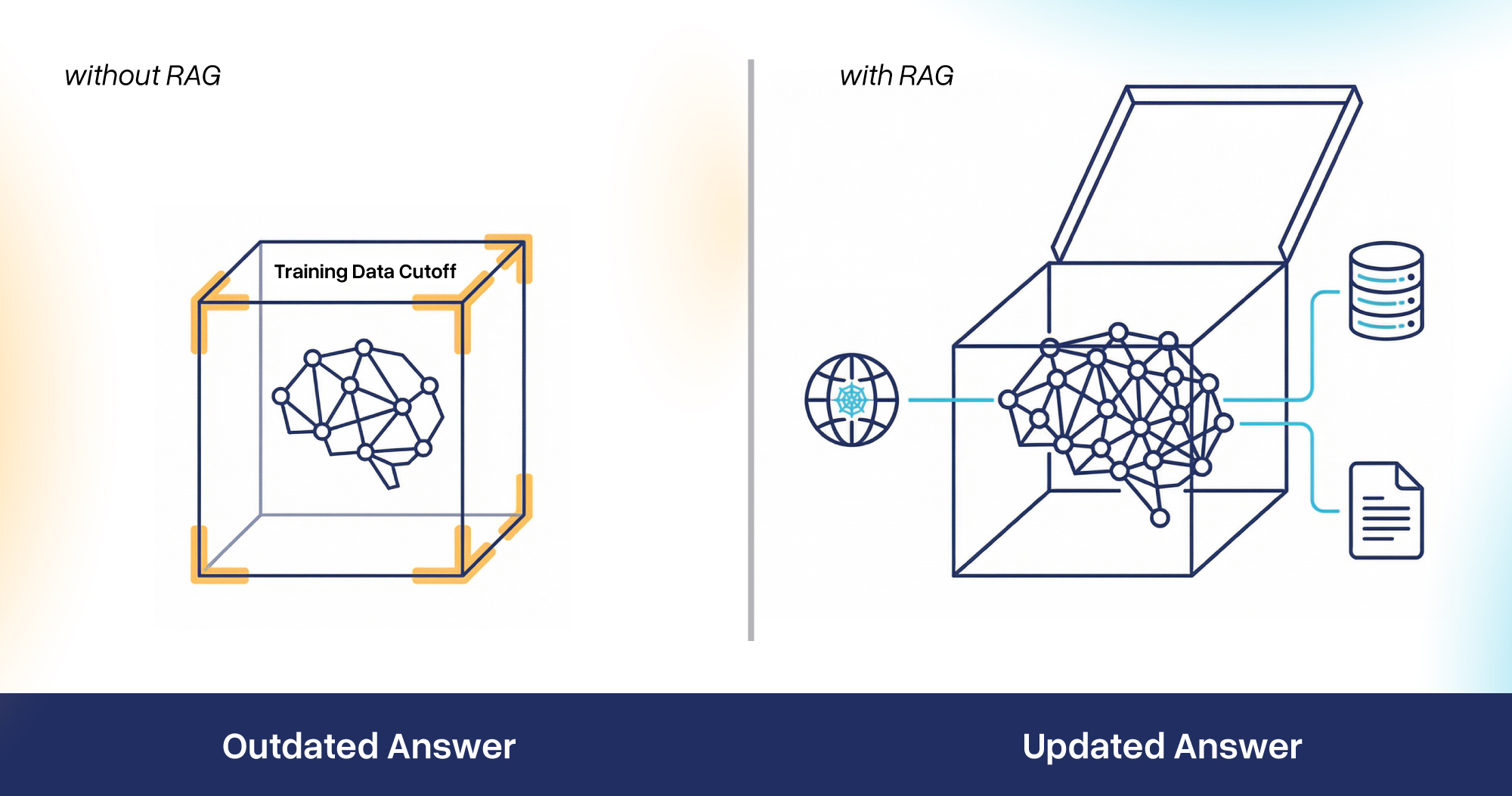

To understand how the knowledge cutoff doesn't always result in a "dead end," we need to talk about RAG (Retrieval-Augmented Generation).

RAG is the bridge between frozen training data and the live internet. Instead of relying only on what the model learned during training, RAG lets the LLM fetch real-time information from external sources, such as search indexes, databases, and APIs, and weave it into its response.

Imagine a brilliant professor who has been locked in a library since the start of 2025. If you ask him about a 2026 event, he won’t know the answer. That’s how a standard LLM with a knowledge cutoff behaves.

RAG is like giving that professor a high-speed internet connection. Now, when you ask about the 2026 event, the professor can Google it, work through the details, and explain the answer thoroughly.
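To make the retrieve-then-augment idea concrete, here is a minimal Python sketch. It assumes a tiny hard-coded document list and naive keyword-overlap scoring standing in for a real search index; `DOCUMENTS`, `retrieve`, and `build_prompt` are hypothetical names for illustration, not any provider's actual pipeline. A real system would then send the assembled prompt to the model.

```python
import re

# Hypothetical mini "index"; a real RAG system would query a search engine or vector store.
DOCUMENTS = [
    "Jan 2026: Wolfspeed announces a single-crystal 300mm SiC wafer.",
    "Pre-2025 overview: the SiC industry is centered on 200mm wafers.",
    "Unrelated: tips for brewing better coffee at home.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase alphanumeric tokens, used for naive keyword matching."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents that share the most words with the query."""
    query_tokens = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(query_tokens & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Augment the user's question with the retrieved snippets before it reaches the LLM."""
    sources = "\n".join(f"- {snippet}" for snippet in context)
    return f"Answer using these sources:\n{sources}\n\nQuestion: {query}"


question = "What's the largest single crystal SiC wafer diameter that has been demonstrated?"
print(build_prompt(question, retrieve(question, DOCUMENTS)))
```

The model's answer can only be as current as what the retrieval step surfaces, which is why ranking matters so much for visibility.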

This should, in theory, solve the cutoff issue entirely. But does it? We decided to run an experiment to see how much training data vs. real-time retrieval shapes the final answer.

The “Knowledge Cutoff” Experiment

We designed an experiment to test whether knowledge cutoffs actually impacted answers across LLMs. We asked AI to help us craft a prompt that should theoretically generate outdated responses based on each model's training data boundary.

We targeted a major tech industry milestone for this experiment, and here's the prompt we landed on:

"What's the largest single crystal SiC wafer diameter that has been demonstrated?"

The logic was simple. Before Gemini 3.1 and GPT-5.4's training cutoff (August 2025), the semiconductor industry was firmly centered on 200mm SiC wafers. But in January 2026, Wolfspeed announced that it had successfully demonstrated a single-crystal 300mm SiC wafer, a major technology breakthrough. If knowledge cutoffs truly dictated responses, both Gemini 3.1 and GPT-5.4 should have named the 200mm SiC wafer as the largest one demonstrated.

We submitted the prompt to both Gemini 3.1 and ChatGPT-5.4.

The result? Both models returned similar responses. Both referenced Wolfspeed’s single-crystal 300mm SiC wafer. Both provided current and accurate answers for the prompt.

We repeated the experiment. Different accounts. Different sessions. Same outcome every time.
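If you want to rerun a test like this yourself, a small harness keeps the tally honest. The sketch below assumes you wrap whatever chat interface or API you use in a function with the shape `ask_model(prompt) -> str`; that function, and the `fake_model` stub standing in for it, are hypothetical. It sends the same prompt repeatedly and counts which wafer diameter each answer reports.

```python
import re
from collections import Counter
from typing import Callable

PROMPT = "What's the largest single crystal SiC wafer diameter that has been demonstrated?"


def classify(answer: str) -> str:
    """Label an answer by the largest three-digit wafer diameter (in mm) it mentions."""
    sizes = [int(m) for m in re.findall(r"\b(\d{3})\s?mm\b", answer)]
    return f"{max(sizes)}mm" if sizes else "no size"


def run_trials(ask_model: Callable[[str], str], n: int = 10) -> Counter:
    """Send the same prompt n times and tally which diameter each answer reports."""
    return Counter(classify(ask_model(PROMPT)) for _ in range(n))


# Hypothetical stand-in for a real chat session or API call.
def fake_model(prompt: str) -> str:
    return "Wolfspeed has demonstrated a 300mm single-crystal SiC wafer, up from 200 mm."


print(run_trials(fake_model, n=5))  # Counter({'300mm': 5})
```

With a real model behind `ask_model`, a mix of labels across runs would show the answer is not stable, while a single label repeated every time matches what we saw.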

This indicated that neither model was stuck in its training-data bubble. Both were pulling fresh information from the web and integrating it seamlessly into their answers. The knowledge cutoff, at least for this type of factual, fast-changing technical query, was essentially invisible to the end user.

This doesn't mean knowledge cutoffs are irrelevant. For nuanced opinions, contextual understanding, and deeply synthesized answers, training data still matters. But for factual, verifiable queries? RAG has largely neutralized the cutoff problem.

Does SEO Still Matter In The AI Era?

So, if LLMs are pulling real-time data through RAG, what determines which content they pull?

This is where SEO re-enters the conversation.

Recent research by SE Ranking found a striking overlap: over 93% of links cited in Google’s AI Overviews already rank in the top 10 organic search results for that query. It's a clear signal that traditional search performance feeds directly into AI visibility.

If you aren't on page one of Google, the AI is less likely to find you during its RAG process. Traditional SEO is now less about driving clicks and more about AI discovery. If you don't rank, you likely don't exist in the AI's context window.
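To check whether a similar overlap holds for your own queries, you can compute it directly. This minimal sketch assumes you have already collected, per query, the URLs an AI answer cited and the top-10 organic URLs (for example, exported from a rank tracker). The data and URLs are hypothetical, and the matching is deliberately simple: it lowercases the whole URL and compares host plus path only.

```python
from urllib.parse import urlsplit


def normalize(url: str) -> str:
    """Reduce a URL to host + path so scheme, 'www.', and trailing slashes don't block a match."""
    parts = urlsplit(url.lower())
    host = parts.netloc.removeprefix("www.")
    return host + parts.path.rstrip("/")


def top10_overlap(cited: dict[str, list[str]], organic: dict[str, list[str]]) -> float:
    """Fraction of AI-cited URLs that also appear in the same query's top-10 organic results."""
    total = hits = 0
    for query, urls in cited.items():
        top10 = {normalize(u) for u in organic.get(query, [])[:10]}
        for url in urls:
            total += 1
            hits += normalize(url) in top10
    return hits / total if total else 0.0


# Hypothetical data: citations from an AI answer vs. organic rankings for the same query.
cited = {"largest sic wafer": ["https://www.example.com/300mm-sic/", "https://blog.example.org/sic"]}
organic = {"largest sic wafer": ["https://example.com/300mm-sic", "https://other.example.net/page"]}
print(top10_overlap(cited, organic))  # 0.5
```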

And this is exactly where E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) becomes your GEO superpower. E-E-A-T was already Google's north star for content quality. Now it's becoming the filter that determines whether RAG systems pick your content or your competitor's.

Think about what RAG needs to do. It searches the web, retrieves relevant pages, and feeds them to the LLM for synthesis. But it doesn't grab everything. It prioritizes pages that are authoritative, well-structured, and trustworthy, which are exactly the signals E-E-A-T optimizes for.

If your site lacks these signals, the AI may retrieve your page but "ignore" it in favor of a more reputable source. In 2026, SEO is the prerequisite for AI visibility.

Knowledge cutoffs are real, but RAG has made them far less impactful for factual queries. The real battleground is in how your content gets selected, retrieved, and cited by AI systems.

Here's how to optimize your content for GEO

- Double down on E-E-A-T: Every signal of expertise, authority, and trustworthiness you build makes your content more likely to be retrieved by RAG systems.

- Keep your content fresh: RAG pulls from the live web. Outdated content gets skipped. Regular updates, current data, and timely publishing keep you in the retrieval pool.

- Optimize for semantic clarity: Write for meaning, not just keywords. Structure your content with clear headings, direct answers, and logical flow.

- Own the top 10: If over 93% of links cited in Google's AI Overviews already rank in the top 10, traditional SEO isn't optional anymore. Search results feed AI visibility.

- Structure data for machines: Schema markup, FAQ sections, clear definitions, and well-organized HTML help both search crawlers and RAG systems understand and extract your content efficiently.
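As an example of the schema markup point, here is a small Python sketch that generates schema.org `FAQPage` JSON-LD from question-and-answer pairs. The `faq_jsonld` helper and the sample question are hypothetical; the `FAQPage`, `Question`, and `Answer` types are standard schema.org vocabulary. How much weight any particular AI system gives this markup is not something we can confirm.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


faqs = [
    ("What is a knowledge cutoff?",
     "The point up to which a model can reliably answer questions from its training data."),
]
# Paste the output into the page inside <script type="application/ld+json"> ... </script>
print(faq_jsonld(faqs))
```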

The influencers are right about one thing: the rules have changed. The brands that win in this new landscape will be the ones that treat every piece of content as a potential AI source.

At Anion Marketing, we help brands navigate exactly this complicated maze of AI discovery. From technical SEO foundations to GEO-ready content strategies, we build digital presences that perform in both traditional search and AI-powered discovery. Whether you're optimizing for Google, ChatGPT, Gemini, or whatever comes next, we make sure your content gets found, retrieved, and cited.

Would you like Anion Marketing to perform an "AI Visibility Audit" on your current top-performing pages to see how AI is interpreting your brand today?

Life as an “SEO expert” has never been more difficult.

It feels like a lifetime ago that we could confidently explain what drives traffic to websites. And after years of struggling with SEO, just as we had cracked the code on search engines, LLMs flipped the script completely.

I’ve scoured Reddit and LinkedIn for AI optimization tactics, yet the data-driven certainty we marketers crave is still missing.

How do you prove that your content strategies are influencing LLM responses? How do you measure success in AI results when every response is different?

Plenty has been written about how to optimize your content for GEO, but far less about how to measure whether your strategies are actually working.

Why Is It So Difficult to Measure Success with GEO?

Traditional SEO is deterministic. To simplify, imagine a vending machine. You insert a coin, press “C4”, and the machine runs its code to drop a bag of chips. It’s repeatable. The outcome is the same every time.

LLMs, on the other hand, are probability engines. Imagine a kid building a castle with Lego bricks. There are hundreds of different bricks and no specific rule or order to follow. The kid looks at whatever they have built so far and decides which brick fits best next. The castle can turn out differently every time.

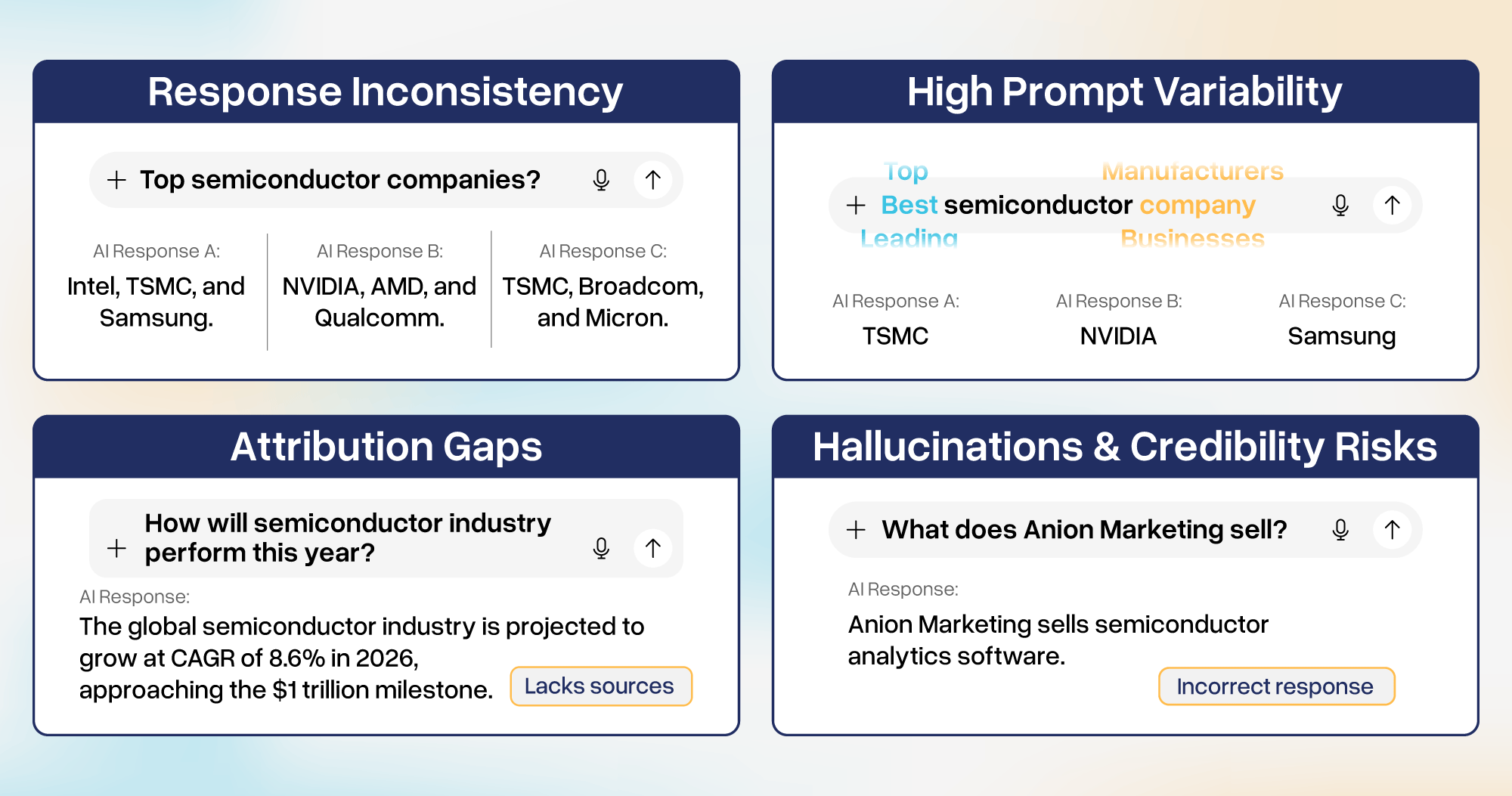

AI is probabilistic. Instead of fetching data from an index or a database, it generates a likely sequence of tokens, one at a time, based on statistical patterns learned from its training data.
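The vending machine versus Lego contrast fits in a few lines of Python. This sketch assumes a toy vocabulary of three candidate "next tokens" with made-up scores; real models score every token in a large vocabulary, but the principle is the same: a lookup returns the same answer every time, while sampling from a probability distribution can return a different answer on each run. The `temperature` parameter mirrors the common sampling setting, where lower values concentrate probability on the top-scoring token.

```python
import math
import random
from typing import Optional

# Deterministic: the vending machine. Same code in, same item out, every time.
VENDING_MACHINE = {"C4": "chips", "A1": "soda"}


def vend(code: str) -> str:
    return VENDING_MACHINE[code]


# Probabilistic: a toy next-token step with made-up scores for the word after
# "The largest SiC wafer demonstrated so far is".
NEXT_TOKEN_SCORES = {"300mm": 2.0, "200mm": 1.5, "150mm": 0.5}


def sample_next(scores: dict[str, float], temperature: float = 1.0,
                rng: Optional[random.Random] = None) -> str:
    """Draw one token with probability proportional to exp(score / temperature), i.e. a softmax."""
    if rng is None:
        rng = random.Random()
    tokens = list(scores)
    weights = [math.exp(scores[t] / temperature) for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]  # choices() normalizes the weights


print([vend("C4") for _ in range(3)])  # ['chips', 'chips', 'chips']
print([sample_next(NEXT_TOKEN_SCORES) for _ in range(5)])  # varies from run to run
```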

The shift from "fetching" to "generating" creates four massive headaches for us B2B marketers:

Response Inconsistency:

Hallucinations and Credibility Risks:

While traditional search engines might link to pages with errors, they won’t invent a feature your product doesn’t have. Instead of just fetching a correct fact, LLMs are optimized to generate plausible, intent-aligned answers that please the user.

A common risk is the "confident wrong answer": the AI uses confidence as a proxy for truth, which can create credibility risks for your business.

Which Metrics Should Be Tracked for AIO/GEO?

If you are looking for a list of KPIs to include in your GEO dashboard, you are in the right place. In the old days, we had a handful of north-star metrics: impressions, clicks, CTR. In the AI era, the dashboard can end up looking like a fighter-jet cockpit.

Here is your new GEO metric glossary:

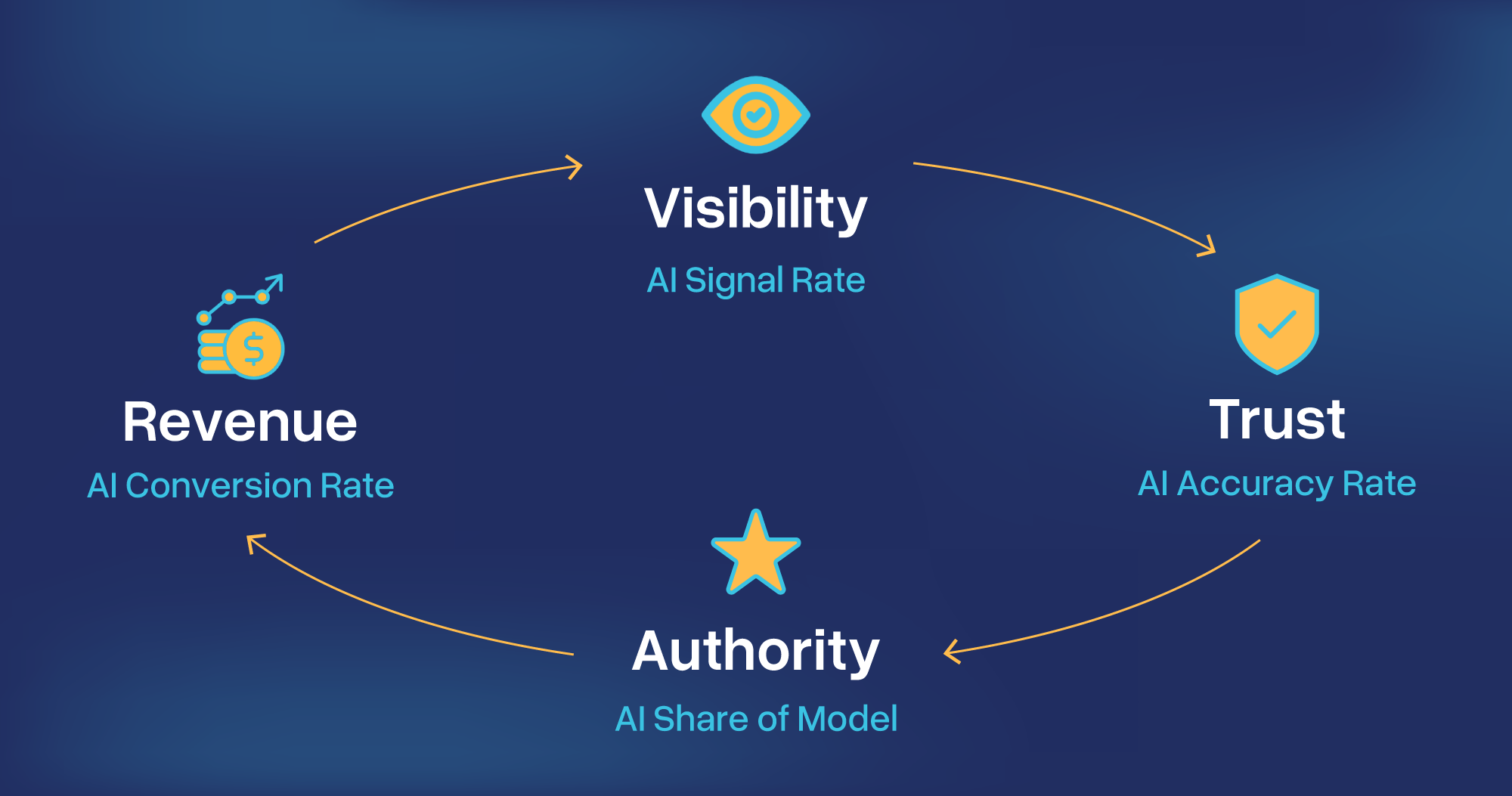

Our Recommendation: Which 4 Metrics to Pick for Navigating GEO?

Measuring GEO can feel like driving in fog, especially when there are so many metrics to track. Let’s strip away the noise and pick the four metrics that help answer the most important questions about your AI strategy.

- AI Signal Rate: In the AI era, the measure of success has shifted from traffic to visibility. While we can’t measure absolute visibility, we can sample it by running topic-specific prompts on a regular schedule and tracking the percentage of responses in which your brand appears.

- AI Accuracy Rate: This crucial metric tracks how accurately AI responses describe your brand, and with it, your brand's credibility.

- AI Share of Model: Benchmarking your AI visibility against your competitors is a crucial part of GEO strategy.

- AI Influenced Conversion Rate: At the end of the day, we are optimizing for revenue. This metric connects the dots between a user’s chat on an LLM platform and a lead in your CRM.

You can dive deeper into your own use case and figure out which metrics best suit your needs. Together, though, these four core metrics give a holistic view of the full funnel. The Signal Rate confirms your visibility, the Accuracy Rate ensures you are trusted, the Share of Model defines your market authority, and the Conversion Rate proves your strategy is working.
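The arithmetic behind these four metrics is simple once you have sampled responses. Below is a minimal sketch under stated assumptions: you repeatedly run a fixed set of topic prompts, record which brands each answer mentions, have a reviewer mark whether answers describe your brand accurately, and pull AI-referred sessions and leads from your analytics and CRM. The record format, brand names, and numbers are hypothetical, and these ratios are one reasonable way to define the metrics, not an industry standard.

```python
from dataclasses import dataclass
from typing import Optional

BRAND = "YourBrand"  # hypothetical: your brand name as it appears in AI answers


@dataclass
class SampledResponse:
    """One LLM answer to one tracked prompt (hypothetical record format)."""
    brands_mentioned: list[str]
    accurate: Optional[bool] = None  # reviewer's verdict; None when BRAND isn't mentioned


def signal_rate(responses: list[SampledResponse]) -> float:
    """Share of sampled answers that mention BRAND at all."""
    if not responses:
        return 0.0
    return sum(BRAND in r.brands_mentioned for r in responses) / len(responses)


def accuracy_rate(responses: list[SampledResponse]) -> float:
    """Of the reviewed answers that mention BRAND, the share that describe it correctly."""
    reviewed = [r for r in responses if BRAND in r.brands_mentioned and r.accurate is not None]
    return sum(r.accurate for r in reviewed) / len(reviewed) if reviewed else 0.0


def share_of_model(responses: list[SampledResponse]) -> float:
    """BRAND's share of all brand mentions (yours plus competitors') across the sample."""
    mentions = [b for r in responses for b in r.brands_mentioned]
    return mentions.count(BRAND) / len(mentions) if mentions else 0.0


def ai_conversion_rate(ai_referred_leads: int, ai_referred_sessions: int) -> float:
    """Leads attributed to AI referrals divided by sessions arriving from AI platforms."""
    return ai_referred_leads / ai_referred_sessions if ai_referred_sessions else 0.0


sample = [
    SampledResponse(["YourBrand", "CompetitorA"], accurate=True),
    SampledResponse(["CompetitorA"]),
    SampledResponse(["YourBrand"], accurate=True),
    SampledResponse(["YourBrand", "CompetitorB"], accurate=False),
    SampledResponse(["CompetitorA", "CompetitorB"]),
]
print(f"Signal rate:    {signal_rate(sample):.0%}")
print(f"Accuracy rate:  {accuracy_rate(sample):.0%}")
print(f"Share of model: {share_of_model(sample):.1%}")
print(f"AI conversion:  {ai_conversion_rate(12, 400):.1%}")
```

The code only does the counting; the Accuracy Rate is only as good as the human review (or checked rubric) that decides what counts as accurate.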

While understanding the metrics is the first step, building the right infrastructure to track them comes next. Tracking success in GEO requires a different set of tools from the ones we have used for decades.

AI is evolving rapidly, and at Anion Marketing, we don’t just monitor it; we engineer your alignment with it. We help B2B semiconductor and tech companies develop GEO strategies, audit and architect technical content, and deploy the right stack of cutting-edge tools to track AI responses and turn them into actionable insights.

Ready to upgrade your SEO to GEO? Contact Anion today!
